Upgrade & Secure Your Future with DevOps, SRE, DevSecOps, MLOps!

We spend hours scrolling social media and waste money on things we forget, but won’t spend 30 minutes a day earning certifications that can change our lives.

Master in DevOps, SRE, DevSecOps & MLOps by DevOps School!

Learn from Guru Rajesh Kumar and double your salary in just one year.

Introduction

Foundation Model API Platforms are cloud-based services that provide access to large AI models (LLMs, multimodal models, and embeddings) through APIs. Instead of building, training, and hosting models internally, developers can plug into these platforms and immediately use advanced AI capabilities in production applications.

These platforms have become central to modern AI systems because AI is no longer just about generating text. It now powers agentic systems that reason, call tools, retrieve knowledge, and automate workflows across applications. As a result, infrastructure requirements have expanded significantly—especially around latency, cost control, evaluation, governance, and security.

Common use cases include:

- AI copilots for coding, customer support, and business workflows

- Retrieval-augmented enterprise knowledge systems

- Autonomous AI agents performing multi-step tasks

- Multimodal applications combining text, image, audio, and video

- Domain-specific model customization and fine-tuning

- Scalable conversational AI products in SaaS platforms

When choosing a platform, teams evaluate:

- Model quality and reasoning capability

- Latency and performance consistency

- Cost control and pricing transparency

- RAG and knowledge integration support

- Evaluation and testing frameworks

- Guardrails and safety systems

- Observability and debugging tools

- Security, compliance, and governance controls

- Deployment flexibility (cloud, hybrid, self-hosted)

- Ecosystem maturity and integrations

Best for: Enterprises, SaaS companies, and engineering teams building production-grade AI systems.

Not ideal for: Simple experiments or lightweight use cases that don’t require scalable infrastructure.

What’s Changed in Foundation Model API Platforms

- Shift toward agent-native systems with tool execution built in

- Multi-model routing instead of single-model dependency

- Strong focus on evaluation pipelines and regression testing

- RAG-first architecture becoming standard

- Cost-aware inference routing for optimization

- Expansion of multimodal AI capabilities

- Stronger security against prompt injection attacks

- Standardization of function calling and structured outputs

- Observability for tokens, cost, and latency tracking

- Enterprise demand for data residency and private deployment

- Growth of open-source model hosting platforms

- Increased governance and compliance requirements

Quick Buyer Checklist

- Data privacy, retention, and governance policies

- Support for multiple models or BYO model

- RAG and vector database compatibility

- Built-in evaluation and testing tools

- Guardrails against unsafe or injected prompts

- Stable latency under production load

- Transparent cost tracking and controls

- Observability (logs, traces, token metrics)

- Enterprise access control (SSO, RBAC, audit logs)

- Risk of vendor lock-in

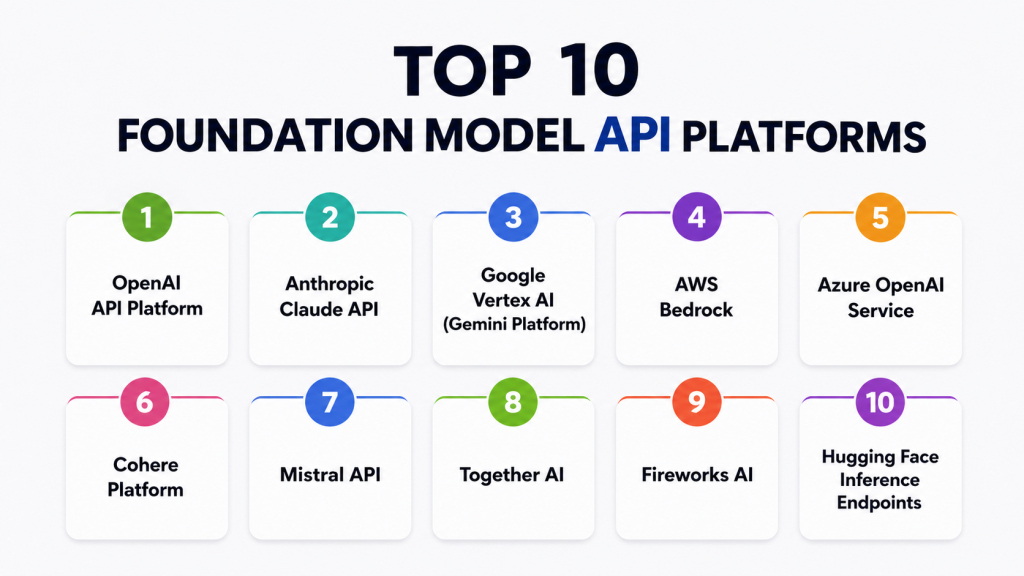

Top 10 Foundation Model API Platforms

1 — OpenAI API Platform

Short description: A leading AI API platform offering high-quality general-purpose and multimodal models widely used in production AI applications.

One-line verdict: Best for high-quality general-purpose AI models and copilots.

Standout Capabilities

- Advanced reasoning models

- Multimodal capabilities

- Tool/function calling for agents

- Streaming responses

- Fine-tuning support (select models)

- Structured outputs

- Strong ecosystem support

AI-Specific Depth

- Model support: Proprietary

- RAG integration: External

- Evaluation: Basic tooling

- Guardrails: Moderation system

- Observability: Usage and token metrics

Pros

- High model quality

- Strong developer ecosystem

- Reliable performance

Cons

- Closed ecosystem

- Costs increase with scale

Security & Compliance

- Enterprise controls available

- Certifications: Not publicly stated

Deployment

- Cloud API only

Integrations

- Strong SDK and third-party ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- AI copilots

- SaaS AI features

- Agent systems

2 — Anthropic Claude API

Short description: A safety-focused AI platform designed for long-context reasoning, high-quality writing, and enterprise-grade alignment.

One-line verdict: Best for long-context reasoning and safe enterprise workflows.

Standout Capabilities

- Long context processing

- Strong summarization

- High-quality reasoning

- Safety-first alignment design

- Tool use support

AI-Specific Depth

- Model support: Proprietary

- RAG integration: External

- Evaluation: Limited

- Guardrails: Strong alignment system

- Observability: Basic metrics

Pros

- Excellent long-document handling

- High safety reliability

- Strong writing quality

Cons

- Smaller ecosystem

- Fewer native developer tools

Security & Compliance

- Enterprise features available

Deployment

- Cloud API

Integrations

- Growing enterprise ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Legal/document workflows

- Research assistants

- Knowledge-heavy applications

3 — Google Vertex AI (Gemini Platform)

Short description: Google Cloud’s AI platform providing Gemini models and full enterprise-grade machine learning infrastructure.

One-line verdict: Best for multimodal enterprise AI and ML workflows.

Standout Capabilities

- Multimodal AI support

- Enterprise ML pipelines

- Data analytics integration

- Scalable infrastructure

AI-Specific Depth

- Model support: Multi-model ecosystem

- RAG integration: Native cloud integration

- Evaluation: MLOps tooling

- Guardrails: Policy-based safety controls

- Observability: Cloud monitoring

Pros

- Strong enterprise scalability

- Multimodal capabilities

- Deep cloud integration

Cons

- Complex configuration

Security & Compliance

- Enterprise-grade controls

Deployment

- Cloud-based

Integrations

- Google Cloud ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Enterprise AI systems

- Multimodal applications

4 — AWS Bedrock

Short description: A unified AWS platform offering access to multiple foundation models through a single enterprise API layer.

One-line verdict: Best for enterprise multi-model flexibility inside AWS.

Standout Capabilities

- Multi-model access

- AWS-native integration

- Guardrails system

- Scalable deployment

AI-Specific Depth

- Model support: Multi-provider

- RAG integration: AWS ecosystem

- Evaluation: Limited

- Guardrails: Built-in safety layer

- Observability: CloudWatch integration

Pros

- Strong enterprise adoption

- Flexible model selection

Cons

- Complex setup

Security & Compliance

- Strong AWS compliance

Deployment

- AWS cloud

Integrations

- Full AWS ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Enterprise AI infrastructure

- Multi-model systems

5 — Azure OpenAI Service

Short description: Microsoft’s enterprise AI platform integrating OpenAI models into Azure with strong governance and hybrid deployment options.

One-line verdict: Best for Microsoft ecosystem enterprises.

Standout Capabilities

- OpenAI models on Azure

- Enterprise governance tools

- Private networking

- Microsoft ecosystem integration

AI-Specific Depth

- Model support: OpenAI models

- RAG integration: Azure AI Search

- Evaluation: Limited

- Guardrails: Content safety system

- Observability: Azure monitoring

Pros

- Strong enterprise security

- Microsoft integration

Cons

- Platform lock-in

Security & Compliance

- Enterprise-grade

Deployment

- Cloud + hybrid

Integrations

- Microsoft ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Enterprise internal tools

- Regulated industries

6 — Cohere Platform

Short description: An enterprise AI platform focused on embeddings, retrieval-augmented generation, and NLP for search systems.

One-line verdict: Best for enterprise NLP and retrieval systems.

Standout Capabilities

- Strong embeddings

- RAG-first architecture

- Multilingual NLP support

- Enterprise search optimization

AI-Specific Depth

- Model support: Proprietary

- RAG integration: Strong

- Evaluation: Limited

- Guardrails: Basic

Pros

- Strong retrieval performance

- Efficient inference

Cons

- Smaller ecosystem

Security & Compliance

- Enterprise features available

Deployment

- Cloud API

Integrations

- Vector DB support

Pricing

Usage-based

Best-Fit Scenarios

- Enterprise search

- RAG applications

7 — Mistral API

Short description: A modern AI platform offering efficient open-weight and proprietary models optimized for speed and cost.

One-line verdict: Best for efficient open-weight models with flexible deployment.

Standout Capabilities

- Open-weight models

- Fast inference

- Cost-efficient design

- Flexible deployment options

AI-Specific Depth

- Model support: Open + proprietary

- RAG integration: External

- Evaluation: Limited

- Guardrails: Minimal

Pros

- High performance

- Cost efficient

Cons

- Limited enterprise tooling

Security & Compliance

- Not publicly stated

Deployment

- Cloud + flexible options

Integrations

- Growing ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Developer applications

- Cost-sensitive AI systems

8 — Together AI

Short description: A developer-focused platform for hosting and fine-tuning open-source models at scale with GPU-optimized infrastructure.

One-line verdict: Best for open-source model hosting and fine-tuning.

Standout Capabilities

- Open-source model hosting

- Fine-tuning pipelines

- High throughput inference

- GPU optimization

AI-Specific Depth

- Model support: Open-source

- RAG integration: External

- Evaluation: Limited

- Guardrails: Minimal

Pros

- Strong OSS ecosystem

- Flexible experimentation

Cons

- Limited governance

Security & Compliance

- Not publicly stated

Deployment

- Cloud API

Integrations

- Hugging Face ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Research teams

- OSS applications

9 — Fireworks AI

Short description: A high-performance inference platform optimized for ultra-low latency and production-scale AI workloads.

One-line verdict: Best for low-latency production inference.

Standout Capabilities

- Ultra-low latency inference

- Model optimization

- Production scaling

- Cost efficiency focus

AI-Specific Depth

- Model support: Multi-model

- RAG integration: External

- Evaluation: Limited

- Guardrails: Minimal

Pros

- Extremely fast inference

- Efficient scaling

Cons

- Narrow platform scope

Security & Compliance

- Not publicly stated

Deployment

- Cloud API

Integrations

- API-first ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Real-time AI applications

- High-traffic systems

10 — Hugging Face Inference Endpoints

Short description: A leading open-source AI deployment platform offering managed inference endpoints for thousands of models.

One-line verdict: Best for open-source model deployment at scale.

Standout Capabilities

- Massive model ecosystem

- Easy deployment of OSS models

- Scalable endpoints

- Strong community support

AI-Specific Depth

- Model support: Open-source

- RAG integration: External

- Evaluation: External tools

- Guardrails: Not built-in

Pros

- Huge model variety

- Strong OSS ecosystem

Cons

- Requires tuning for production

Security & Compliance

- Not publicly stated

Deployment

- Cloud endpoints

Integrations

- Hugging Face ecosystem

Pricing

Usage-based

Best-Fit Scenarios

- Custom AI pipelines

- OSS deployments

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Rating |

|---|---|---|---|---|---|---|

| OpenAI | General AI apps | Cloud | Proprietary | Quality | Cost scaling | N/A |

| Anthropic | Long context | Cloud | Proprietary | Safety | Ecosystem size | N/A |

| Vertex AI | Enterprise AI | Cloud | Multi-model | Multimodal | Complexity | N/A |

| AWS Bedrock | AWS enterprises | Cloud | Multi-model | AWS integration | Setup | N/A |

| Azure OpenAI | Microsoft orgs | Cloud | OpenAI models | Governance | Lock-in | N/A |

| Cohere | NLP systems | Cloud | Proprietary | Retrieval | Ecosystem | N/A |

| Mistral | Efficient models | Cloud | Open + proprietary | Speed | Tooling gaps | N/A |

| Together AI | OSS hosting | Cloud | Open-source | Flexibility | Governance | N/A |

| Fireworks AI | Low latency | Cloud | Multi-model | Speed | Narrow scope | N/A |

| Hugging Face | OSS deployment | Cloud | Open-source | Variety | Tuning effort | N/A |

Scoring & Evaluation

Scoring reflects comparative performance across typical production use cases.

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Total |

|---|---|---|---|---|---|---|---|---|---|

| OpenAI | 10 | 9 | 8 | 9 | 9 | 7 | 8 | 9 | 8.6 |

| Anthropic | 9 | 9 | 9 | 7 | 8 | 7 | 8 | 8 | 8.3 |

| Vertex AI | 9 | 9 | 8 | 10 | 7 | 8 | 9 | 8 | 8.6 |

| AWS Bedrock | 9 | 9 | 8 | 10 | 7 | 8 | 9 | 9 | 8.7 |

| Azure OpenAI | 9 | 9 | 9 | 10 | 7 | 8 | 10 | 9 | 8.9 |

| Cohere | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.8 |

| Mistral | 8 | 8 | 6 | 7 | 8 | 9 | 6 | 7 | 7.6 |

| Together AI | 8 | 8 | 6 | 7 | 8 | 9 | 6 | 7 | 7.6 |

| Fireworks | 8 | 9 | 6 | 7 | 8 | 10 | 6 | 7 | 7.9 |

| Hugging Face | 8 | 8 | 6 | 9 | 8 | 8 | 6 | 8 | 7.8 |

Top 3 Enterprise

- AWS Bedrock

- Azure OpenAI

- Vertex AI

Top 3 SMB

- OpenAI

- Anthropic

- Cohere

Top 3 Developers

- OpenAI

- Mistral

- Hugging Face

Which Platform Is Right for You?

Solo / Freelancer

OpenAI or Anthropic for simplicity and quality.

SMB

OpenAI, Cohere, Mistral for balance of cost and capability.

Mid-Market

AWS Bedrock, Azure OpenAI, Vertex AI for scalability.

Enterprise

Azure OpenAI, AWS Bedrock, Vertex AI for governance and compliance.

Regulated Industries

Azure OpenAI and AWS Bedrock for control and compliance.

Budget vs Premium

- Budget: Mistral, Hugging Face, Together AI

- Premium: OpenAI, Anthropic, Vertex AI

Build vs Buy

- Build: Hugging Face, Together AI

- Buy: OpenAI, Azure OpenAI, AWS Bedrock

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define use case

- Run pilots

- Set success metrics

- Log outputs

60 Days

- Add evaluation framework

- Implement guardrails

- Control access

- Optimize cost

90 Days

- Deploy monitoring

- Add model routing

- Implement governance

- Scale production

Common Mistakes

- No evaluation system

- Ignoring prompt injection risks

- Cost overruns from token usage

- Lack of observability

- No fallback models

- Weak access control

- Poor RAG implementation

- Vendor lock-in issues

- No version control for prompts

- Over-automation without review

FAQs

What are foundation model API platforms?

They provide access to large AI models via APIs for building applications.

Do I need multiple providers?

Often yes, for redundancy and cost optimization.

Can I switch providers easily?

Not always due to API differences.

Is my data used for training?

Depends on provider and configuration.

What is RAG?

Retrieval-Augmented Generation combines LLMs with external knowledge.

What is BYO model?

Using your own hosted model with the platform.

Are these platforms secure?

Most offer enterprise controls, but features vary.

What is model routing?

Automatically selecting the best model per task.

Do I need evaluation tools?

Yes, for production reliability.

What are guardrails?

Safety systems that prevent harmful outputs.

Which platform is cheapest?

Depends on usage; open-source hosting is often cheaper.

Can I self-host models?

Yes via open-source platforms.

Conclusion

Foundation Model API platforms form the backbone of modern AI systems, powering everything from copilots to autonomous agent workflows. Each platform offers a distinct trade-off between model quality, cost efficiency, enterprise governance, open-source flexibility, and performance optimization. There is no universal best choice—the right platform depends entirely on your product requirements, infrastructure constraints, and long-term AI strategy. The most successful teams evaluate these platforms not based on popularity, but on reliability, scalability, security, and how well they support real production workload